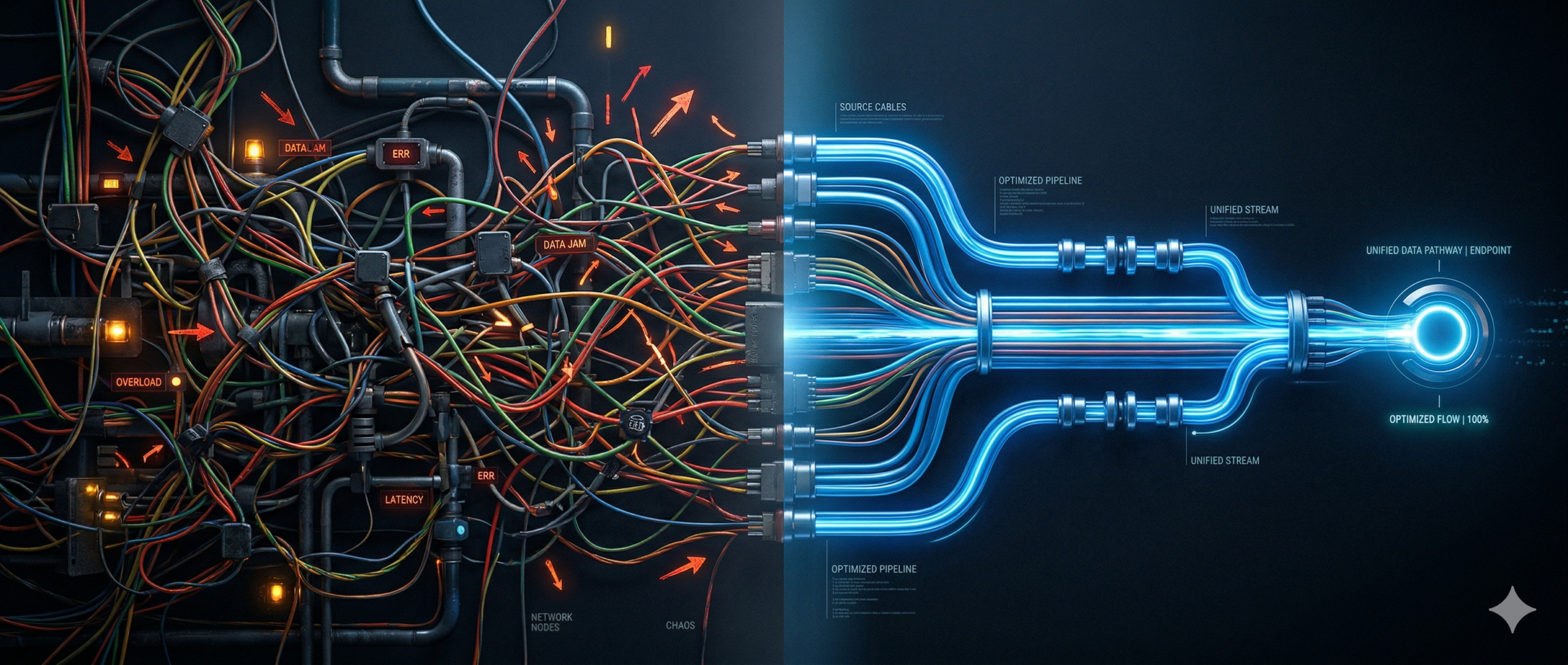

In the realm of modern software development, artificial intelligence (AI) has become a central player. Teams in various industries are increasingly turning to AI to address product and workflow challenges within their software. However, the process of building production systems with AI remains complex. It is not the AI model itself that poses the greatest challenge, but rather the intricate web of components surrounding it. This complexity often manifests as a “glue-code” problem, where storage, compute, orchestration, networking, authentication, and inference are spread across different systems with varying operating models.

The more these components intersect, the more developers are required to shift their focus from crafting product logic to connecting these services together. An integrated platform model can alleviate this burden by streamlining the deployment and operation of AI applications in today’s cloud landscape. By comparing two scenarios – a neocloud combined with a hyperscaler versus a vertically integrated cloud stack – we can highlight the efficiency advantages offered by the integrated model.

While the initial costs of these approaches may seem similar on the surface, the integrated model proves to be more efficient by reducing the time developers spend on writing glue code and managing the challenges that arise as AI products scale. The future of AI platforms lies in vertical integration rather than an abundance of tools. Platforms that consolidate compute, storage, and inference functions help to minimize friction, expedite development, and empower smaller teams to build and scale AI applications more effectively.

When deploying AI applications, it becomes evident that they rely on much more than just inference. Workflows encompass various elements such as object storage, computation, model endpoints, persistence, and monitoring, each demanding its own operational expertise. Unfortunately, these components are typically not seamlessly interconnected. The prevailing cloud environment consists of siloed products, resources, and services that are only able to communicate with each other through APIs. Consequently, developers spend a significant amount of time setting up and maintaining these connections, which can be laborious.

In a setup that pairs a neocloud such as Baseten or Fireworks.AI with a hyperscaler such as AWS, the neocloud hosts the AI model while the hyperscaler runs the surrounding application or workflow. Consider an application that stores user-uploaded documents in a service like S3 and uses a large language model (LLM) to summarize them. Because the two providers share no cloud identity primitives, the developer may have to handle authentication with API keys. In this scenario, a file upload triggers a Lambda function through an S3 event in AWS. The Lambda function downloads the file, converts its contents into a JSON prompt, and sends an HTTP request, authenticated with the API key, to the neocloud’s model endpoint. The function then parses the model’s response and writes the result back to S3 or a database.
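The handoffs in this flow can be sketched as a single handler. This is a minimal illustration, not a reference implementation: the storage and HTTP calls are passed in as plain functions so the glue logic stands alone, and the request and response fields (`prompt`, `summary`) and the `summaries/` output prefix are hypothetical, since the real contract depends on the model provider.

```python
import json
from typing import Callable
from urllib.parse import unquote_plus


def object_location(event: dict) -> tuple[str, str]:
    """Return (bucket, key) from the first record of an S3 event notification."""
    s3 = event["Records"][0]["s3"]
    # Object keys arrive URL-encoded in S3 event notifications.
    return s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def build_request(document_text: str, api_key: str) -> tuple[dict, bytes]:
    """Build headers and a JSON body for the external model endpoint.

    The "prompt" field is an assumed request shape, not a specific provider's API.
    """
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    body = {"prompt": f"Summarize the following document:\n\n{document_text}"}
    return headers, json.dumps(body).encode("utf-8")


def handle_upload(
    event: dict,
    read_object: Callable[[str, str], str],
    call_model: Callable[[dict, bytes], str],
    write_object: Callable[[str, str, str], None],
    api_key: str,
) -> str:
    """Glue code: storage event -> download -> prompt -> model call -> write back."""
    bucket, key = object_location(event)
    text = read_object(bucket, key)
    headers, body = build_request(text, api_key)
    raw_response = call_model(headers, body)
    # The "summary" field is an assumed response shape.
    summary = json.loads(raw_response)["summary"]
    out_key = f"summaries/{key}.json"
    write_object(bucket, out_key, json.dumps({"source": key, "summary": summary}))
    return out_key
```

In a real deployment, `read_object` and `write_object` would wrap the storage SDK and `call_model` would wrap an HTTP client. Every one of those wrappers is code the team owns and has to keep working.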

If the model has scaled down to zero, the request may incur additional cold-start latency. When processing large batches, developers must implement their own batching, retries, and error handling. The neocloud manages none of this pipeline natively, so the developer has to orchestrate every step between the AWS services and the external inference layer. This orchestration is essential but does not differentiate the product, yet it must still be built and maintained. Developer time and computing resources go into connecting services and maintaining the code that bridges them, which raises costs.
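As a rough picture of what "their own batching, retries, and error handling" means in practice, the sketch below splits work into batches and retries each batch with exponential backoff. The function names, batch size, attempt count, and delays are illustrative assumptions rather than recommendations from any provider.

```python
import time
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def batched(items: Iterable[T], size: int) -> Iterator[list[T]]:
    """Yield consecutive batches of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    batch: list[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def call_with_retries(
    fn: Callable[[T], R],
    arg: T,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> R:
    """Call fn(arg), retrying on any exception with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn(arg)
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


def process_all(items, fn, batch_size, **retry_options):
    """Run fn over every batch, collecting results and failed batches separately."""
    results, failures = [], []
    for batch in batched(items, batch_size):
        try:
            results.append(call_with_retries(fn, batch, **retry_options))
        except Exception as exc:
            failures.append((batch, exc))
    return results, failures
```

A production version would typically also distinguish retryable errors (timeouts, rate limits) from permanent ones and add jitter to the delays, which is more surface area to test and monitor.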

At scale, the operational implications become more apparent. Scaling becomes increasingly challenging as each custom handoff introduces another potential failure point that teams must monitor and maintain. With growing usage, elements such as queues, retries, timeouts, and concurrency limits come under strain, requiring teams to allocate more time to stabilize the system. Additionally, networking complexities intensify when hosting an LLM that needs to be exposed through a public API endpoint. This transforms the model into an external Software as a Service (SaaS) dependency, raising concerns about security, egress, latency, and network boundaries.

Moreover, data pipelines remain disjointed because the external inference layer has no native connections to services like AWS’s S3 or Step Functions. Developers must move and reshape data manually and spend engineering effort keeping the connections between services reliable. Batch and event-driven workflows may need additional orchestration layers. Costs end up fragmented across providers and are difficult to track, especially when the providers use different billing models. Forecasting spend and debugging cost spikes become harder when usage spans multiple systems, which often compels companies to allocate entire teams to managing a fragmented stack.

A typical AI pipeline may involve 5 to 10 integration points, each introducing latency, failure risk, and engineering overhead. At scale, teams may need to assign developers or entire teams to maintain these connections. This is the hidden cost of glue code.

As inference scales up, moving from serverless to dedicated capacity becomes essential. Serverless inference suits low or unpredictable traffic, but it may become less efficient at higher volumes because of cold starts, concurrency limits, variable latency, and per-request pricing. Dedicated providers offer reserved capacity, consistent performance, and more room to optimize GPU utilization, particularly when inference becomes a core component of the product.
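The point at which per-request pricing stops being the cheaper option can be estimated with simple arithmetic. The sketch below assumes token-based serverless pricing and hourly GPU pricing; every price and volume in the usage example is a made-up placeholder, not a figure from any rate card, and the model ignores whether the reserved GPUs can actually serve the required throughput.

```python
def serverless_monthly_cost(
    requests_per_month: float,
    tokens_per_request: float,
    price_per_million_tokens: float,
) -> float:
    """Monthly cost when every request is billed by token volume."""
    return requests_per_month * tokens_per_request / 1_000_000 * price_per_million_tokens


def dedicated_monthly_cost(
    gpu_count: int,
    price_per_gpu_hour: float,
    hours_per_month: float = 730.0,
) -> float:
    """Monthly cost of reserved GPUs, billed whether or not they are busy."""
    return gpu_count * price_per_gpu_hour * hours_per_month


def break_even_requests(
    tokens_per_request: float,
    price_per_million_tokens: float,
    gpu_count: int,
    price_per_gpu_hour: float,
    hours_per_month: float = 730.0,
) -> float:
    """Requests per month at which serverless and dedicated costs are equal."""
    cost_per_request = tokens_per_request / 1_000_000 * price_per_million_tokens
    return dedicated_monthly_cost(gpu_count, price_per_gpu_hour, hours_per_month) / cost_per_request


if __name__ == "__main__":
    # Hypothetical inputs: 2,000 tokens per request, $0.50 per million tokens,
    # one GPU at $3.00 per hour.
    volume = break_even_requests(2_000, 0.50, 1, 3.00)
    print(f"Break-even at roughly {volume:,.0f} requests per month")
```

Below the break-even volume the serverless bill is lower; above it, reserved capacity wins on price, provided the hardware is sized for the load.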

Managing multi-cloud AI systems demands specialized expertise to navigate failures, retries, networking challenges, and inconsistencies across services. Building and retaining teams capable of operating these systems proves to be expensive and challenging. In many instances, the cost of maintaining the system surpasses the cost of running it.

An ideal AI cloud platform should not only host models but also unify compute, storage, networking, inference, and persistence under a single umbrella. This entails ensuring that authentication, permissions, deployment, logging, and cost visibility exhibit consistent behavior throughout the stack. Common workflow steps should feel like built-in platform features rather than costly custom integrations. Vertical integration is not merely a buzzword but a genuine advantage in enhancing the developer experience.

DigitalOcean presents a practical alternative to the fragmented model by reducing the number of seams developers need to manage. Rather than relying on a neocloud for inference and a hyperscaler for other functions, DigitalOcean integrates compute, storage, and AI inference within a unified platform model. While this does not eliminate every dependency, it significantly alleviates the integration burden associated with modern AI development.

Deploying the same architecture on DigitalOcean involves fewer moving parts and a more streamlined process. For instance, when a file lands in Spaces, an application or workflow can detect the new object and invoke a Function that reads it, converts its contents into a JSON payload, and sends an authenticated HTTP request to a Gradient AI Platform Serverless Inference endpoint using a model access key. Once the model responds, the application can store the result in a Managed Database or write it back to Spaces. Fewer integration points mean fewer failure modes, lower latency, clearer cost visibility, and reduced operational overhead, which lets smaller teams build and scale AI applications without assigning engineers to maintain glue code.
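The authenticated request in that flow might look like the sketch below. It assumes the inference endpoint accepts an OpenAI-style chat-completions body and a bearer token; that request and response shape is an assumption here, and the endpoint URL and model name are left as parameters rather than guessed. The platform's documentation is the authority on the actual contract.

```python
import json
import urllib.request


def build_inference_request(
    endpoint_url: str,
    model_access_key: str,
    model: str,
    document_text: str,
) -> urllib.request.Request:
    """Build (but do not send) an authenticated summarization request.

    The chat-completions payload shape is an assumption for illustration.
    """
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "Summarize the user's document."},
            {"role": "user", "content": document_text},
        ],
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {model_access_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_summary(response_body: bytes) -> str:
    """Pull the generated text out of an assumed chat-completions response."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]
```

Sending the request is then a call to `urllib.request.urlopen(request)`, with the response body passed to `extract_summary` before the result is stored.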

The advantage offered by DigitalOcean goes beyond mere convenience; it provides leverage. By minimizing the need for glue code, DigitalOcean empowers developers to concentrate on creating intelligent applications instead of intricately connecting infrastructure components. In a landscape where complexity reigns supreme, reducing seams emerges as a competitive edge.

To validate this approach, a demo was built and deployed entirely on DigitalOcean to compare the cost of running the application at scale on an integrated platform versus a split stack that uses a hyperscaler for infrastructure and a neocloud for inference. Once both stacks are compared on the same model, GPT OSS 120B, they show similar costs for infrastructure and inference alone. The public rate cards suggest only a narrow spread between the two at each volume tier; the real economic difference lies in labor costs.

If a company needs to hire software engineers to develop and maintain integrations in a neocloud-plus-hyperscaler stack, the added labor expense can offset the apparent infrastructure savings. As systems grow more complex, maintaining the integrations becomes a significant cost in its own right. A multi-provider stack introduces a glue-code problem that requires extensive engineering effort to build, monitor, and evolve the connective tissue spanning different vendors. Running the same pattern on a single integrated platform like DigitalOcean reduces the cross-cloud orchestration burden, which streamlines operations and lowers the long-term labor cost of managing the system.
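The way labor can outweigh a small rate-card difference is easy to see with a back-of-the-envelope model. The sketch below adds integration labor to platform spend; all figures in the usage example are invented placeholders chosen only to show the mechanics, and none of them come from the demo described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackCost:
    """Annualized cost model for one deployment option (all inputs hypothetical)."""

    infrastructure_per_month: float
    inference_per_month: float
    integration_engineers: float  # full-time equivalents spent on glue code
    engineer_cost_per_year: float

    def annual_platform_cost(self) -> float:
        return 12 * (self.infrastructure_per_month + self.inference_per_month)

    def annual_labor_cost(self) -> float:
        return self.integration_engineers * self.engineer_cost_per_year

    def annual_total(self) -> float:
        return self.annual_platform_cost() + self.annual_labor_cost()


if __name__ == "__main__":
    # Placeholder numbers: the split stack is slightly cheaper on the rate card
    # but is assumed to need more integration work.
    split = StackCost(6_000, 3_000, integration_engineers=1.5, engineer_cost_per_year=180_000)
    integrated = StackCost(6_500, 3_500, integration_engineers=0.5, engineer_cost_per_year=180_000)
    for name, stack in (("split", split), ("integrated", integrated)):
        print(f"{name}: platform {stack.annual_platform_cost():,.0f}, "
              f"labor {stack.annual_labor_cost():,.0f}, total {stack.annual_total():,.0f}")
```

With inputs like these, the labor term dominates the total, so a modest difference in integration headcount matters more than the difference in monthly platform spend.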

In conclusion, the AI industry has made significant strides in making models more accessible, yet the challenge lies in building and maintaining production systems around those models. Platform differentiation will hinge on minimizing the number of seams developers must navigate to keep a system operational. The teams that scale AI quickly will be those that spend minimal engineering effort on plumbing and choose infrastructure that supports the transition from serverless to dedicated capacity without a complete rebuild. Ultimately, the winning platform may not be the one with the most capabilities, but the one that demands the least effort to use those capabilities effectively.
