Artificial intelligence (AI) has become a central part of modern software development, with teams across industries turning to it to solve product and workflow challenges. Building and deploying production AI systems remains complex, however, and integration is a significant hurdle. The real challenge in deploying AI applications lies not in the model itself but in the surrounding infrastructure.

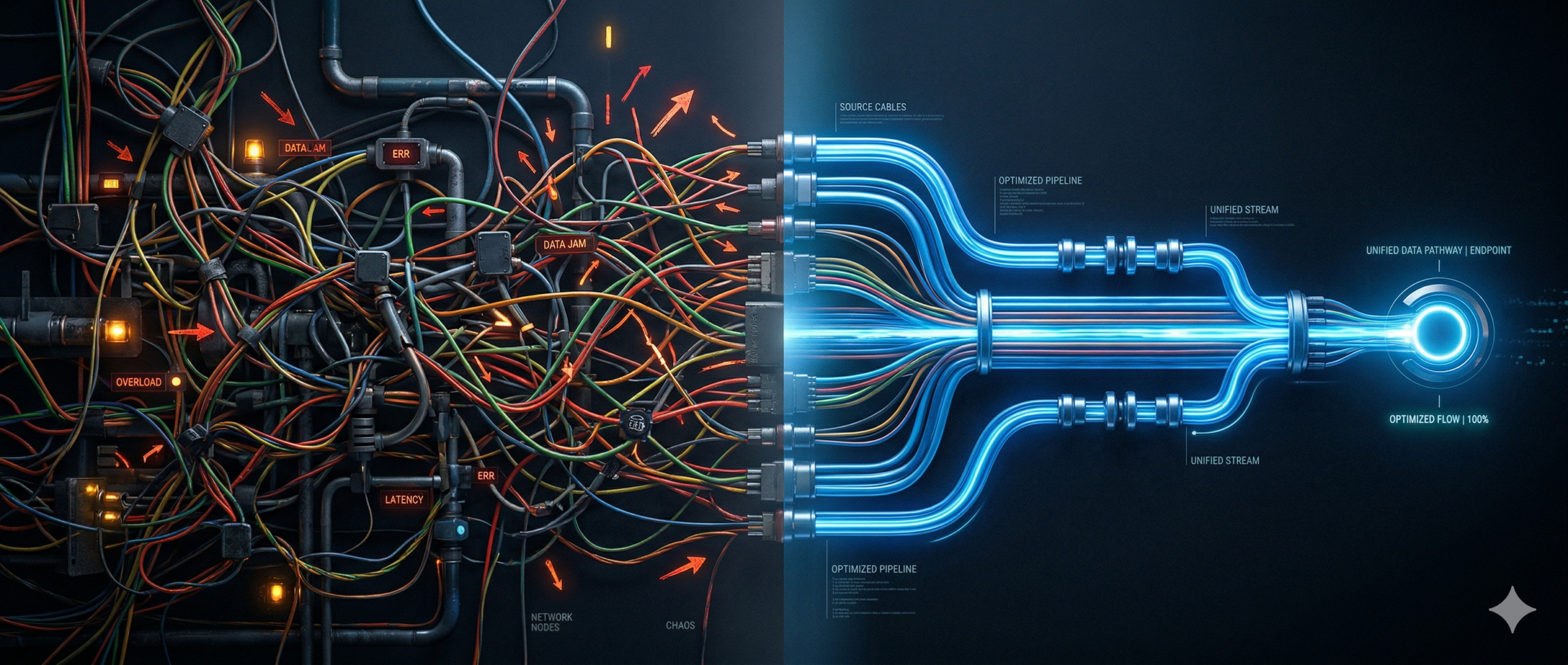

The complexity arises when storage, compute, orchestration, networking, authentication, and inference are spread across different systems, each with its own operating model. Developers then spend more time writing glue code to connect these systems than building product features, and effort shifts from product logic to integration work.

Streamlining the deployment and operation of AI applications in the cloud calls for a more integrated platform model. Comparing two setups, a neocloud combined with a hyperscaler and a vertically integrated cloud stack, shows where the integrated model improves efficiency and cuts development time.

In the current cloud environment, building an AI application requires expertise in object storage, compute, model endpoints, and monitoring. These components are often not natively connected, so developers spend valuable time setting up and maintaining the connections themselves. Bridging the gaps between services across different clouds is manual work, and it makes AI systems harder to scale, secure, and debug.

For example, in a setup where a neocloud hosts the model and a hyperscaler orchestrates the application workflow, developers must manage authentication via API keys and manually orchestrate each step in the workflow. This manual process increases the operational overhead and directly impacts the cost of development and deployment.
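
The handoff described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any provider's actual API: `build_auth_headers`, `run_workflow`, and `fake_infer` are hypothetical names, and the inference call is a local stub standing in for an HTTP request to the neocloud's model endpoint.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class StepResult:
    name: str
    output: str


def build_auth_headers(api_key: str) -> Dict[str, str]:
    """Build the headers for the inference provider (assumed bearer-token auth)."""
    if not api_key:
        raise ValueError("missing API key for the inference provider")
    return {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }


def run_workflow(
    document: str,
    infer: Callable[[str, Dict[str, str]], str],
    api_key: str,
) -> List[StepResult]:
    """Run each step by hand; every step is glue code the team owns."""
    headers = build_auth_headers(api_key)
    results: List[StepResult] = []

    # Step 1: prepare the prompt on the orchestration side.
    prompt = f"Summarize: {document.strip()}"
    results.append(StepResult("prepare_prompt", prompt))

    # Step 2: cross the provider boundary to reach the hosted model.
    completion = infer(prompt, headers)
    results.append(StepResult("inference", completion))

    # Step 3: clean up the response before handing it to the application.
    results.append(StepResult("postprocess", completion.strip().capitalize()))
    return results


def fake_infer(prompt: str, headers: Dict[str, str]) -> str:
    """Hypothetical stand-in for the remote model call."""
    if not headers.get("Authorization", "").startswith("Bearer "):
        raise PermissionError("request is not authenticated")
    return f" summary of {len(prompt)} characters "


if __name__ == "__main__":
    for step in run_workflow("  quarterly report text  ", fake_infer, "example-key"):
        print(step.name, "->", step.output)
```

Even in this toy version, credential handling, step ordering, and response cleanup all live in application code rather than in the platform.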

As AI systems scale, the operational challenges become more pronounced. Developers must monitor and maintain the multiple failure points introduced by custom handoffs, queues, retries, timeouts, and concurrency limits. Additionally, hosting a large language model (LLM) often requires exposing it through a public API endpoint, which raises concerns around security, latency, and network boundaries.
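
To make the retry, timeout, and concurrency concerns concrete, here is a small sketch of the plumbing a team typically ends up writing around a cross-provider call. It uses only the Python standard library; `TransientError`, `call_with_retries`, and `run_bounded` are hypothetical names chosen for illustration, and the backoff values are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures that are worth retrying."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")


def run_bounded(
    tasks: Sequence[Callable[[], T]],
    max_concurrency: int = 2,
    timeout_s: float = 5.0,
) -> List[T]:
    """Run tasks with a cap on parallelism and a per-result wait limit."""
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        futures = [pool.submit(call_with_retries, task) for task in tasks]
        # The timeout bounds how long the caller waits for each result;
        # it does not cancel a task that is already running.
        return [future.result(timeout=timeout_s) for future in futures]
```

Each parameter here (attempt count, delay, pool size, timeout) is a setting someone has to choose, monitor, and revisit as traffic grows, which is the maintenance burden the paragraph above describes.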

The lack of integrated data pipelines complicates the process further, because developers must move and reshape data between services themselves. Engineering time goes to data management and to keeping the connections between services reliable. The fragmented nature of multi-cloud AI systems also makes it hard to track spending, forecast costs, and debug issues that span multiple systems.

A vertically integrated cloud platform like DigitalOcean offers a practical answer: it reduces the number of integration points and simplifies deployment. By unifying compute, storage, and AI inference under one platform, DigitalOcean minimizes the integration burden and lets smaller teams build and scale AI applications more efficiently.

By deploying a demo application entirely on DigitalOcean, we can see the cost benefits of running an integrated platform compared to a split stack using a hyperscaler for infrastructure and a neocloud for inference. The reduced number of integration points on DigitalOcean means fewer failure modes, lower latency, clearer cost visibility, and reduced operational overhead for developers.

While infrastructure and model costs may appear similar between an integrated platform and a multi-provider stack, the real economic difference lies in labor. Maintaining cross-cloud integrations in a neocloud-plus-hyperscaler setup can significantly increase the engineering effort required to keep the system running. This added labor cost can outweigh any apparent infrastructure savings, and it compounds as the system grows in complexity.
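
The argument can be expressed as a simple cost model: monthly total equals infrastructure plus inference plus integration labor. The sketch below assumes that model; `StackCost` is a hypothetical name, and every number in the example is a made-up placeholder chosen only to show the arithmetic, not a measurement or a price from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackCost:
    """Monthly cost of running one stack (all fields are assumptions)."""

    infra_usd: float          # compute, storage, networking
    inference_usd: float      # model hosting or per-token charges
    integration_hours: float  # engineering time spent on glue code
    hourly_rate_usd: float    # loaded cost of one engineering hour

    @property
    def labor_usd(self) -> float:
        return self.integration_hours * self.hourly_rate_usd

    @property
    def total_usd(self) -> float:
        return self.infra_usd + self.inference_usd + self.labor_usd


if __name__ == "__main__":
    # Placeholder inputs: the split stack is cheaper on infrastructure
    # but needs more integration hours each month.
    split = StackCost(infra_usd=900, inference_usd=600,
                      integration_hours=40, hourly_rate_usd=100)
    integrated = StackCost(infra_usd=1000, inference_usd=600,
                           integration_hours=10, hourly_rate_usd=100)
    for name, stack in (("split", split), ("integrated", integrated)):
        print(f"{name}: labor={stack.labor_usd:.0f} total={stack.total_usd:.0f}")
```

Under this model, the comparison is driven by `integration_hours` whenever infrastructure and inference costs are close, so teams evaluating a stack would need to estimate that figure from their own experience.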

In conclusion, the future of AI platforms lies in reducing the number of seams developers have to manage and streamlining the integration process. The winning platform will not necessarily be the one with the most capabilities, but the one that requires the least effort to use them. By choosing an integrated platform like DigitalOcean, developers can focus on building intelligent applications rather than managing infrastructure complexities, ultimately leading to more efficient AI development and deployment processes.
