Artificial intelligence (AI) is rapidly transforming how organizations operate across industries. From simple chatbots to advanced AI agents that interact with tools and data autonomously, enterprise AI is evolving quickly. According to Microsoft’s 2025 Work Trend Index Annual Report, a significant percentage of leaders expect AI agents to be integrated into their strategies within the next 12 to 18 months, and 24% of organizations have already deployed AI across their operations.

As AI adoption accelerates, the infrastructure that supports AI workloads is becoming increasingly complex. The 2025 HashiCorp Cloud Complexity Report found that 97% of organizations use multiple tools or services to manage their cloud environments, and 73% say platform engineering and security do not operate as a unified function. This complexity creates new challenges as organizations adopt AI.

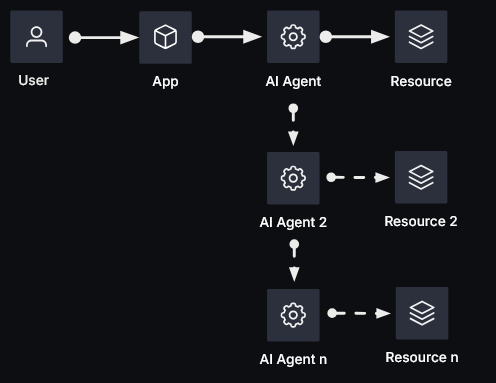

Traditional identity and access management (IAM) systems were designed around human users and their predictable patterns of behavior. AI agents behave differently: they interact autonomously with tools and systems, and they can invoke other agents. The result is a dynamic, constantly changing environment that traditional IAM models struggle to secure.

Gartner reports that the ratio of machine to human identities has reached 45:1, highlighting the scale at which organizations are deploying agentic workloads. Each new agent introduces its own identity and set of credential paths, expanding policy boundaries and increasing audit requirements across the enterprise. To scale agentic AI adoption effectively, organizations must establish a robust security and operational foundation that mitigates these risks.

Organizations need to address four critical risk areas that commonly appear in AI workflows:

1. Overprivilege without visibility: Agents often accumulate excessive access, creating a large blast radius if an agent is compromised.

2. Lack of real-time enforcement: Policies that are not enforced at the point of action cannot stop unauthorized activities as they happen.

3. Impersonation and invisible delegation: When an agent impersonates a user, the delegation is invisible and it becomes unclear who actually performed an action. Explicit delegation with consent preserves audit trails and accountability.

4. Zero accountability: Without unique agent identities and detailed logging, basic security questions, such as which agent performed an action and on whose behalf, become difficult to answer, which puts compliance at risk.

The urgency for organizations to establish agentic runtime security strategies is underscored by the IBM 2025 Cost of a Data Breach Report, which reveals the substantial financial impact of security incidents related to AI. Regulatory pressures, operational sprawl, and the growing threat of agent compromise further emphasize the need for immediate action to secure AI workflows.

To implement agentic AI successfully, organizations should follow five key imperatives:

1. Register every agent with a unique identity to establish accountability.

2. Strip standing privileges and implement least privilege access controls.

3. Tie actions to intent by capturing user context, consent, and delegation.

4. Enforce policies at the point of use to prevent unauthorized actions.

5. Produce proof of control through detailed auditing and compliance measures.
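To make the five imperatives more concrete, here is a minimal, self-contained Python sketch that models them in memory. It is a hypothetical illustration, not the HashiCorp Vault API: the class and method names (`AgentRegistry`, `grant_consent`, `issue_credential`, `authorize`), the scope format, and the 300-second default TTL are all assumptions chosen for clarity. Each agent gets a unique ID, credentials are short-lived and limited to scopes a user explicitly consented to, every request is checked at the point of use, and every decision is written to an audit log.

```python
import secrets
import time
from dataclasses import dataclass, field

# Hypothetical in-memory model of the five imperatives. This is NOT the Vault
# API; AgentRegistry and its methods are illustrative names only.

Scope = tuple  # (action, resource), e.g. ("read", "crm/customers")


@dataclass(frozen=True)
class Credential:
    token: str
    agent_id: str
    on_behalf_of: str   # delegating user, so actions tie back to intent
    scopes: frozenset   # only the consented (action, resource) pairs
    expires_at: float   # short TTL instead of a standing privilege


@dataclass
class AgentRegistry:
    agents: dict = field(default_factory=dict)       # agent_id -> owning team
    consents: set = field(default_factory=set)       # (user, agent_id, scope)
    credentials: dict = field(default_factory=dict)  # token -> Credential
    audit_log: list = field(default_factory=list)    # every decision is recorded

    def register(self, name: str, owner: str) -> str:
        # 1. Unique identity per agent (random suffix avoids shared IDs).
        agent_id = f"agent-{name}-{secrets.token_hex(4)}"
        self.agents[agent_id] = owner
        self._audit("register", agent_id, owner, None, True)
        return agent_id

    def grant_consent(self, user: str, agent_id: str, scope: Scope) -> None:
        # 3. Explicit, recorded consent from a user for a single scope.
        self.consents.add((user, agent_id, scope))
        self._audit("consent", agent_id, user, scope, True)

    def issue_credential(self, agent_id, user, requested, ttl_seconds=300.0):
        # 2. No standing privileges: a short-lived credential limited to the
        # requested scopes that this user actually consented to.
        if agent_id not in self.agents:
            raise KeyError(f"unregistered agent: {agent_id}")
        granted = frozenset(s for s in requested if (user, agent_id, s) in self.consents)
        cred = Credential(secrets.token_urlsafe(16), agent_id, user, granted,
                          time.time() + ttl_seconds)
        self.credentials[cred.token] = cred
        self._audit("issue", agent_id, user, sorted(granted), True)
        return cred

    def authorize(self, token: str, action: str, resource: str) -> bool:
        # 4. Enforcement at the point of use: checked on every request.
        cred = self.credentials.get(token)
        allowed = (
            cred is not None
            and time.time() < cred.expires_at
            and (action, resource) in cred.scopes
        )
        self._audit("authorize",
                    cred.agent_id if cred else None,
                    cred.on_behalf_of if cred else None,
                    (action, resource), allowed)
        return allowed

    def _audit(self, event, agent_id, user, detail, allowed):
        # 5. Proof of control: a record of which agent did what, for whom.
        self.audit_log.append({"ts": time.time(), "event": event, "agent_id": agent_id,
                               "user": user, "detail": detail, "allowed": allowed})


registry = AgentRegistry()
bot = registry.register("invoice-bot", owner="platform-team")
registry.grant_consent("alice", bot, ("read", "crm/customers"))
cred = registry.issue_credential(bot, "alice", [("read", "crm/customers"), ("delete", "crm/customers")])
print(registry.authorize(cred.token, "read", "crm/customers"))    # True
print(registry.authorize(cred.token, "delete", "crm/customers"))  # False: never consented
```

The design choice worth noting is that the credential, not the agent, carries the privileges: the agent holds nothing between tasks, and the `delete` request fails because Alice never consented to it, even though the agent asked for it.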

HashiCorp Vault can serve as a critical tool for securing agentic AI workflows, offering identity-based controls, policy-based access, and robust reporting capabilities. Three use cases show how Vault can protect, manage, and audit AI agents in different scenarios while keeping operations secure and compliant.
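Policy-based access in Vault is expressed as rules that attach capabilities to paths. The Python sketch below is a hypothetical, simplified model loosely inspired by that style, not Vault's evaluation engine: the policy name `invoice-agent` and all paths are made up for illustration. It treats a trailing `*` as a prefix match and a `+` segment as exactly one path segment, denies anything not explicitly granted, and lets an explicit `deny` override any grant. As a simplification, it unions capabilities across every matching rule; Vault itself resolves overlapping rules by selecting the most specific matching path, so consult the Vault policy documentation for the exact semantics.

```python
import re

# Hypothetical, simplified model of path-based ACL policies, loosely inspired
# by Vault's policy syntax. Policy names and paths are illustrative only.

POLICIES = {
    "invoice-agent": {
        "secret/data/invoices/*": {"read", "list"},
        "secret/data/invoices/archive/*": {"deny"},
        "secret/data/agents/+/config": {"read"},
    },
}


def _compile(pattern: str) -> re.Pattern:
    # A trailing '*' matches any suffix; a '+' segment matches exactly one segment.
    is_glob = pattern.endswith("*")
    body = pattern[:-1] if is_glob else pattern
    parts = ["[^/]+" if seg == "+" else re.escape(seg) for seg in body.split("/")]
    return re.compile("/".join(parts) + (".*" if is_glob else ""))


def is_allowed(policy_names, path, capability, policies=POLICIES) -> bool:
    # Collect capabilities from every rule (across all attached policies)
    # whose pattern matches the requested path.
    matched = set()
    for name in policy_names:
        for pattern, caps in policies.get(name, {}).items():
            if _compile(pattern).fullmatch(path):
                matched |= caps
    if "deny" in matched:         # an explicit deny always wins
        return False
    return capability in matched  # anything not granted is denied by default


print(is_allowed(["invoice-agent"], "secret/data/invoices/2025-01", "read"))       # True
print(is_allowed(["invoice-agent"], "secret/data/invoices/archive/2019", "read"))  # False: explicit deny
print(is_allowed(["invoice-agent"], "secret/data/payroll/q1", "read"))             # False: no matching rule
```

Deny-by-default is what limits the blast radius here: an agent attached only to `invoice-agent` cannot reach payroll secrets, because no rule ever granted them.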

In conclusion, establishing consistent runtime security patterns is essential for organizations embarking on AI adoption. By implementing standardized security controls early on, organizations can build and scale agentic AI workflows securely and efficiently. To learn more about how HashiCorp Vault enables safe and scalable agentic AI adoption, watch the Agentic Runtime Security Explained video on the IBM Technology YouTube page or reach out to the HashiCorp team for tailored consultation.

For more information, refer to this article.