Title: Enhancing AI Security with HashiCorp Vault: A Deep Dive into Ariso.ai’s Encryption Strategy

In the fast-evolving world of artificial intelligence and machine learning, data security remains paramount. Ariso.ai, a leading provider of AI-driven productivity solutions, has strengthened its encryption strategy to safeguard sensitive information. This article explores how Ariso.ai used HashiCorp Vault’s Transit Secrets Engine to secure its multi-tenant AI assistant, Ari.

Ari, developed by Ariso.ai, is a context-aware AI assistant that seamlessly integrates with various business tools, including calendars and document management systems. The AI assistant is designed to understand a company’s workflow and priorities, delivering personalized insights and automating routine tasks. However, with the increasing volume of sensitive data handled by Ari, such as messages, meeting transcripts, and personal reflections, the need for a robust encryption solution became apparent.

The Challenge: Securing Sensitive Data

Before adopting HashiCorp Vault, Ariso.ai faced several encryption-related challenges. The company relied on application-level encryption with manually managed keys stored in environment variables. This approach had significant drawbacks, including:

- No Tenant Isolation: A single compromised key could expose data from all organizations using the platform.

- Lack of Key Rotation: Rotating keys required coordinated downtime and re-encryption, which was impractical.

- Absence of an Audit Trail: Security teams had no visibility into cryptographic operations.

- Key Sprawl: Each service managed its own keys independently, leading to operational complexity.

These issues posed unacceptable business risks, making it crucial to adopt a more sophisticated encryption strategy.

The Solution: Leveraging HashiCorp Vault’s Transit Secrets Engine

Ariso.ai evaluated several cloud-native Key Management Services (KMS) such as AWS KMS, Azure Key Vault, and Google Cloud KMS. However, none offered the specific capabilities needed, particularly encryption as a service with context-based key derivation and multi-cloud portability. HashiCorp Vault’s Transit Engine provided the comprehensive solution Ariso.ai required.

Architecture: Two-Layer Envelope Encryption

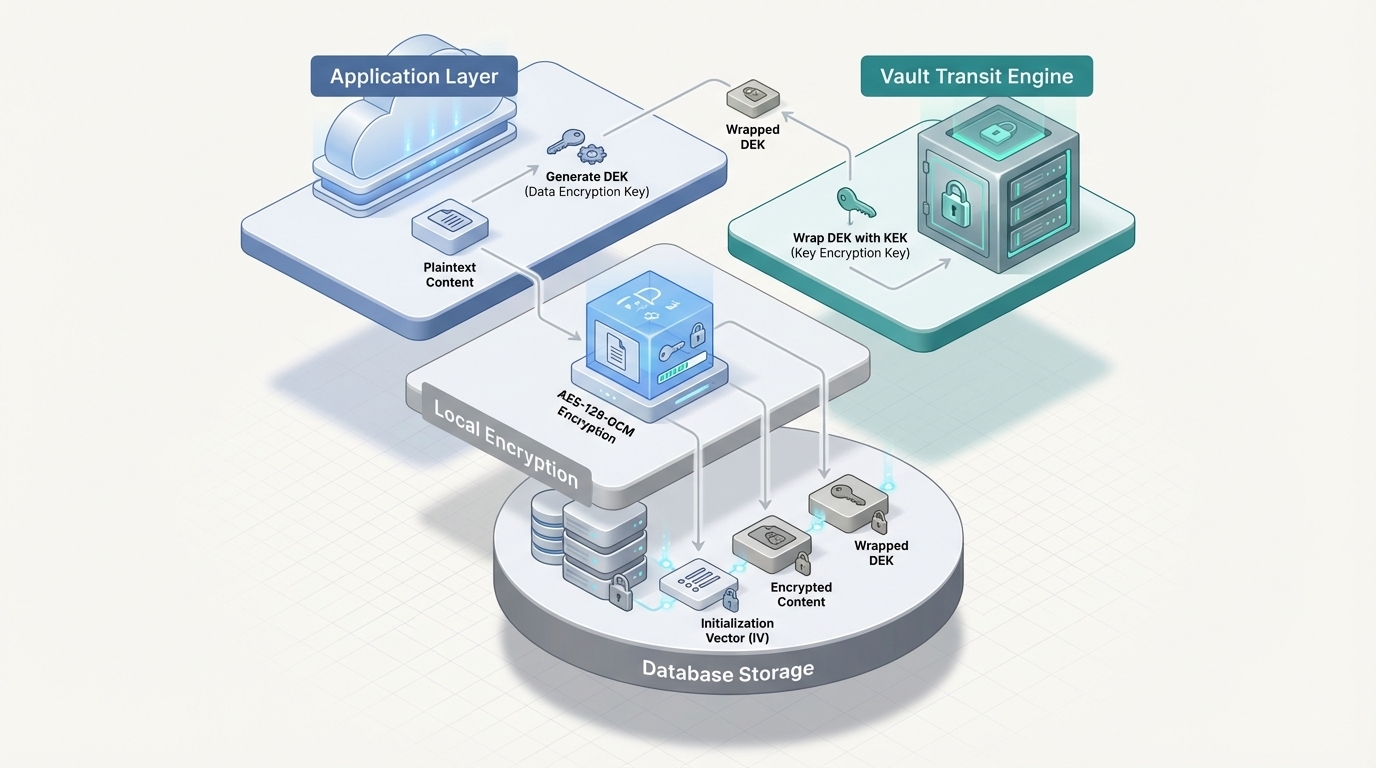

Rather than processing all data through Vault, which could create a bottleneck, Ariso.ai implemented a two-layer envelope encryption strategy:

- Data Encryption Key (DEK): A random AES-128-GCM key is generated per encryption context. The application uses this key to encrypt data locally, ensuring fast processing.

- Key Encryption Key (KEK): Managed by Vault’s Transit Engine, the KEK wraps the DEK, allowing it to be stored securely alongside the encrypted data. This separation ensures that Vault only handles small key payloads, maintaining low latency.
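As a rough illustration of how a wrapped DEK might sit alongside the data it protects, the sketch below packs the pieces into one JSON-serializable record. The `EnvelopeRecord` class and its field names are hypothetical and not taken from Ariso.ai's schema. The only Vault-specific detail assumed is that Transit returns wrapped keys as strings of the form `vault:v<version>:<base64>`.

```python
import base64
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvelopeRecord:
    """Hypothetical stored layout for one envelope-encrypted value."""

    wrapped_dek: str   # DEK wrapped by the Vault-managed KEK, e.g. "vault:v1:..."
    nonce: bytes       # per-message AES-GCM nonce chosen by the application
    ciphertext: bytes  # data encrypted locally with the plaintext DEK

    def to_json(self) -> str:
        # Binary fields are base64-encoded so the record fits a JSON column.
        return json.dumps({
            "wrapped_dek": self.wrapped_dek,
            "nonce": base64.b64encode(self.nonce).decode("ascii"),
            "ciphertext": base64.b64encode(self.ciphertext).decode("ascii"),
        })

    @classmethod
    def from_json(cls, raw: str) -> "EnvelopeRecord":
        doc = json.loads(raw)
        return cls(
            wrapped_dek=doc["wrapped_dek"],
            nonce=base64.b64decode(doc["nonce"]),
            ciphertext=base64.b64decode(doc["ciphertext"]),
        )
```

With a layout like this, only the short `wrapped_dek` string needs to travel to Vault on decryption, so the size of a Vault request does not grow with the size of the payload.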

By adopting this approach, Ariso.ai achieved sub-millisecond Vault transit latency, with a median of 0.46ms and a 99th percentile of 0.63ms. Additionally, the platform maintained an 8:1 encrypt-to-decrypt ratio, confirming effective DEK caching. Importantly, there was zero plaintext in production, as all sensitive data was encrypted.

Key Derivation: Ensuring Multi-Level Isolation

Ariso.ai needed to implement cryptographic separation at three levels: organization, user, and session. Managing individual keys for each context would have been cumbersome, leading to tens of thousands of keys with complex lifecycle management. Vault’s Transit Engine, however, supports context-based key derivation, which provided the perfect solution.

Context-Based Key Derivation

Using a single master KEK with context-based key derivation, Ariso.ai could produce a unique cryptographic key for each context. This approach supplies the tens of thousands of unique keys the platform needs without per-key management overhead. Different contexts yield cryptographically independent keys, all derived from one manageable master key.
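The idea that one master key can yield separate per-context keys can be illustrated with HKDF (RFC 5869), built here from the standard library. This is a conceptual sketch only: in the architecture described, derivation happens inside Vault and the master key never leaves it, and the exact derivation function Vault applies is not assumed here. The function name, the placeholder master key, and the context strings are all hypothetical.

```python
import hashlib
import hmac


def hkdf_sha256(master_key: bytes, context: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256, an all-zero salt, and `context` as info."""
    hash_len = hashlib.sha256().digest_size
    if length > 255 * hash_len:
        raise ValueError("requested output is too long for HKDF-SHA256")
    # Extract: condense the input key material into a pseudorandom key.
    prk = hmac.new(b"\x00" * hash_len, master_key, hashlib.sha256).digest()
    # Expand: stretch the pseudorandom key into `length` bytes bound to the context.
    okm = b""
    block = b""
    counter = 1
    while len(okm) < length:
        block = hmac.new(prk, block + context + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


master = bytes(32)  # placeholder only; a real master key stays inside Vault
org_key = hkdf_sha256(master, b"org:acme")
user_key = hkdf_sha256(master, b"org:acme/user:42")
```

The same master key and context always reproduce the same derived key, which is why no per-context key needs to be stored, while changing any part of the context produces an unrelated key.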

For example, organization settings and meeting transcripts are shared across all users in an organization, while personal messages and reflections are private to individual users. Ephemeral conversations, on the other hand, become cryptographically inaccessible once a session ends, ensuring forward secrecy.
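One way to express these three scopes is as context strings sent with each Transit request. The `org:`/`user:`/`session:` naming below, and the choice to nest sessions under users, are hypothetical conventions rather than Ariso.ai's actual format. What Vault's Transit API does require for derived keys is that the `context` parameter be base64-encoded.

```python
import base64
from typing import Optional


def build_context(org_id: str, user_id: Optional[str] = None,
                  session_id: Optional[str] = None) -> str:
    """Return a base64-encoded derivation context for the narrowest scope given."""
    if session_id is not None and user_id is None:
        raise ValueError("a session context requires a user_id")
    for value in (org_id, user_id, session_id):
        # Reject the separator inside IDs so two scopes can never collide.
        if value is not None and "/" in value:
            raise ValueError("identifiers must not contain '/'")
    parts = [f"org:{org_id}"]
    if user_id is not None:
        parts.append(f"user:{user_id}")
    if session_id is not None:
        parts.append(f"session:{session_id}")
    return base64.b64encode("/".join(parts).encode("utf-8")).decode("ascii")


shared = build_context("acme")                 # organization settings, transcripts
private = build_context("acme", "u1")          # personal messages, reflections
ephemeral = build_context("acme", "u1", "s9")  # session-scoped conversations
```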

Performance at Scale: Caching Strategy

To maintain high performance, Ariso.ai implemented an in-memory DEK cache. This approach significantly reduced the need for network calls to Vault for every encrypt/decrypt operation, minimizing latency.

- On a cache hit (95.8% of operations), the DEK is already in memory, so encryption and decryption run locally at hardware-accelerated AES speed.

- On a cache miss, a single Vault API call unwraps the DEK, which is then cached for future use.
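A minimal sketch of such a cache follows, assuming the LRU-with-TTL design mentioned in the lessons learned. `DekCache`, its parameters, and the `unwrap` callback (standing in for the single Vault call made on a miss) are hypothetical names, not Ariso.ai's implementation.

```python
import time
from collections import OrderedDict
from typing import Callable


class DekCache:
    """In-memory LRU cache of plaintext DEKs with a per-entry TTL."""

    def __init__(self, unwrap: Callable[[str], bytes], max_entries: int = 1024,
                 ttl_seconds: float = 3600.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._unwrap = unwrap
        self._max = max_entries
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = OrderedDict()  # wrapped DEK -> (expiry time, plaintext DEK)
        self.hits = 0
        self.misses = 0

    def get(self, wrapped_dek: str) -> bytes:
        now = self._clock()
        entry = self._entries.get(wrapped_dek)
        if entry is not None and entry[0] > now:
            self._entries.move_to_end(wrapped_dek)  # mark as most recently used
            self.hits += 1
            return entry[1]
        # Miss or expired entry: one unwrap call, then cache the result.
        self.misses += 1
        dek = self._unwrap(wrapped_dek)
        self._entries[wrapped_dek] = (now + self._ttl, dek)
        self._entries.move_to_end(wrapped_dek)
        while len(self._entries) > self._max:
            self._entries.popitem(last=False)  # evict the least recently used
        return dek
```

The `hits` and `misses` counters make it straightforward to report a hit rate. A production version would likely also drop expired entries proactively instead of waiting for the next lookup or eviction.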

The caching strategy was highly effective, with Vault transit operations averaging 0.47ms and 0.63ms at the 99th percentile. This performance ensured that Ariso.ai’s encryption layer added negligible overhead to database operations.
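As a back-of-the-envelope check on that claim, the reported hit rate and average latency can be combined into an expected Vault cost per operation. This assumes a cache hit involves no Vault round trip and a miss involves exactly one call at the average latency, which is a simplification.

```python
hit_rate = 0.958      # share of operations served from the DEK cache
avg_vault_ms = 0.47   # average Vault transit latency reported above

# Only cache misses pay for a Vault round trip.
expected_vault_ms = (1 - hit_rate) * avg_vault_ms
print(f"{expected_vault_ms:.3f} ms per operation")  # prints "0.020 ms per operation"
```

Under these assumptions, Vault contributes roughly two hundredths of a millisecond to an average operation.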

Results: Enhanced Data Protection and Security Posture

Through the adoption of HashiCorp Vault’s Transit Secrets Engine, Ariso.ai achieved comprehensive data protection:

- Data Protection Coverage: Sensitive data categories, such as organization data, user messages, and session data, were encrypted with context-based key derivation.

- Improved Security Posture: The platform achieved zero plaintext in production, multi-level cryptographic isolation, and forward secrecy. A complete audit trail ensured visibility into all cryptographic operations.

- Infrastructure Optimization: By choosing HCP Vault Dedicated, Ariso.ai avoided the operational burden of self-hosting, benefiting from Vault Enterprise features like performance replication and disaster recovery.

Lessons Learned and Best Practices

Ariso.ai’s journey with HashiCorp Vault offers valuable insights for other organizations seeking to enhance their encryption strategies:

Do’s

- Use Envelope Encryption: Local AES encryption with Vault-wrapped keys maintains low latency, regardless of payload size.

- Implement DEK Caching: An LRU cache with a 1-hour TTL reduced Vault API calls by 96%.

- Design Context-Based Key Derivation from the Start: Retrofitting isolation levels is more challenging than building them in from the beginning.

- Use Session-Scoped Encryption for Ephemeral Data: Provides forward secrecy without additional key management.

- Design for Key Rotation on Day One: Include KEK version tracking in your schema for zero-downtime rotation.

- Use HCP Vault: Unless you have a dedicated security infrastructure team, leveraging a managed service is beneficial.
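To illustrate the key rotation item above: Vault Transit ciphertexts carry the key version in a `vault:v<N>:` prefix, so the KEK version of a record can be read straight from its stored wrapped DEK and tracked in the schema. The helper names below are hypothetical, and the rule for when to rewrap is an assumption made for illustration.

```python
import re

_VAULT_CIPHERTEXT = re.compile(r"^vault:v(\d+):(.+)$")


def kek_version(wrapped_dek: str) -> int:
    """Extract the key version from a Transit ciphertext such as 'vault:v3:...'."""
    match = _VAULT_CIPHERTEXT.match(wrapped_dek)
    if match is None:
        raise ValueError("not a Vault Transit ciphertext")
    return int(match.group(1))


def needs_rewrap(wrapped_dek: str, latest_version: int) -> bool:
    """True when the DEK was wrapped under an older KEK version than the latest."""
    return kek_version(wrapped_dek) < latest_version
```

Records flagged this way could be sent to Transit's rewrap endpoint in the background. Only the small wrapped DEK changes, so the bulk ciphertext does not need to be re-encrypted.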

Don’ts

- Don’t Send Large Payloads Directly to Transit: Use envelope encryption; Vault should only handle DEK wrapping.

- Don’t Cache Unwrapped DEKs Indefinitely: Balance performance with security via appropriate TTLs.

- Don’t Ignore Audit Logs: They provide a forensic trail for cryptographic operations.

- Don’t Hardcode Vault Tokens: Use dynamic authentication methods like Kubernetes auth or AWS IAM auth.

Migration Strategy

For teams adopting Vault encryption on existing data, Ariso.ai recommends a phased migration strategy:

- Schema Preparation: Add nullable encrypted JSONB columns alongside existing plaintext columns and deploy Vault-integrated application code.

- Dual-Write: Encrypt new data, writing it to both columns, and backfill existing records in batches.

- Read Migration: Prefer encrypted columns if populated, falling back to plaintext during the transition.

- Cleanup: Verify 100% encryption coverage, clear plaintext columns, and remove fallback code.
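The dual-write, backfill, and read-fallback phases might look like the following, with rows modeled as plain dictionaries. The column names (`body`, `body_encrypted`) and the `encrypt`/`decrypt` callables are hypothetical stand-ins for the application's schema and its Vault-backed encryption layer.

```python
from typing import Any, Callable, Dict, List, Optional

Row = Dict[str, Any]


def write_row(row: Row, plaintext: str, encrypt: Callable[[str], str]) -> None:
    """Dual-write phase: populate both the legacy and the encrypted column."""
    row["body"] = plaintext
    row["body_encrypted"] = encrypt(plaintext)


def read_row(row: Row, decrypt: Callable[[str], str]) -> Optional[str]:
    """Read-migration phase: prefer the encrypted column, else fall back."""
    encrypted = row.get("body_encrypted")
    if encrypted is not None:
        return decrypt(encrypted)
    return row.get("body")


def backfill(rows: List[Row], encrypt: Callable[[str], str],
             batch_size: int = 500) -> int:
    """Encrypt rows that only have plaintext, one batch at a time."""
    pending = [r for r in rows
               if r.get("body_encrypted") is None and r.get("body") is not None]
    for start in range(0, len(pending), batch_size):
        for row in pending[start:start + batch_size]:
            row["body_encrypted"] = encrypt(row["body"])
        # A real job would commit its transaction here, once per batch.
    return len(pending)
```

Once `backfill` reports zero pending rows and reads no longer hit the fallback branch, the plaintext column can be cleared and the fallback removed.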

Conclusion

HashiCorp Vault’s Transit Secrets Engine provided Ariso.ai with a robust solution to encrypt large volumes of records daily across a multi-tenant platform. The combination of envelope encryption, context-based key derivation, and intelligent caching delivered strong security and production-grade performance.

By centralizing key governance and enforcing cryptographic isolation at the organization, user, and session levels, HCP Vault Dedicated mitigated risks while removing operational burdens. Ariso.ai’s case study serves as a valuable reference for multi-tenant applications processing sensitive data at scale, showcasing the benefits of a well-architected encryption strategy.

For more information on HashiCorp Vault’s capabilities, see the HashiCorp Vault documentation.
